A 40-year-old non-smoker in Delhi faces a measurable probability of dying in the next year. If that 40-year-old is a woman, her chances of surviving the year are slightly better than a man’s. If she lives in a wealthy area, her chances are better again than those of a woman living in a less privileged location.123

How do we know this? We know this because actuaries work with mortality and health data from millions of people, and build tables that segment risk by age, gender, smoking status, income, and even geography, to price policies accurately.4

Types of risk

Over time, experts have classified risk into different types. Here’s a table about the different types of risk:

| RISK TYPE | DEFINITION | CHARACTERISTICS | EXAMPLES |
|---|---|---|---|
| HAZARD RISK (Pure Risk)56 | The possibility of loss from natural events or accidents. The oldest, most intuitive kind of risk. | • Unintended: nobody wants them • Objective frequency data: insurers have centuries of records • Insurable: probability and consequence can be estimated from historical data • Cannot create profit: only causes loss | • Fire and property damage • Windstorms and hail • Theft and burglary • Flooding • Liability from personal injury |
| OPERATIONAL RISK78910 | The risk that your business’s internal machinery breaks down. Unlike hazard risk, it’s inherent to doing business: you can’t eliminate it, only manage it. Also cannot be diversified away. Defined by Basel II as: “Risk of loss from inadequate or failed internal processes, people and systems, or external events.” | • Inherent to operations: impossible to eliminate • Non-diversifiable: all firms in an industry face similar operational risks • Hard to quantify: driven by control quality and governance, which are difficult to measure • Multiple sources: spans people, processes, systems, and external events | • Process Failures: Accountant enters data incorrectly, leading to wrong financial statements; Wrong calculation of tax liabilities • Human Error: Surgeon operates on wrong patient; Employee sends confidential email to wrong recipient; Trader executes wrong order • System Failures: Bank’s payment system crashes; Company’s website goes down during peak shopping season; Database corruption losing customer data • Fraud: Employee embezzles funds; Vendor submits fake invoices; Internal collusion to bypass controls • External Events: Natural disaster destroys office; Key supplier suddenly defaults; Cyberattack from external actor |
| FINANCIAL RISK111213 | Risk from changes in financial variables: credit defaults, price movements, or inability to access funds. Encompasses three subcategories. | • Market-driven: determined by supply and demand in public markets • Observable prices: interest rates, bond spreads, stock prices are public • High correlation: multiple financial risks often move together during crises | • Credit Risk: Borrower fails to repay loan; Bank faces default • Market Risk (Interest Rate, Equity, Currency, Commodity): Interest rates rise, bond portfolio value falls; Stock prices decline; Rupee weakens against dollar; Oil prices spike increasing business costs • Liquidity Risk (Asset & Funding): Cannot sell asset when needed (asset liquidity); Cannot raise cash when obligations due (funding liquidity) |
| STRATEGIC RISK14 | Risk that your business strategy is wrong. Risk from strategic decisions and competitive threats that can derail long-term objectives. Highest impact, but low frequency. | • High impact, low frequency: rare but potentially catastrophic • Long-term consequences: effects persist for years • Cross-functional impact: affects entire organization • Forward-looking: requires anticipating future changes • Not quantifiable: each situation is somewhat unique | • Poor Strategy Decisions: Entering unviable new markets; Expanding too quickly into new industries; Pricing strategy that’s unprofitable • Competitive Threats: New disruptive competitor; Competitor’s aggressive pricing; Merger of competitors • Technological Disruption: Emerging technology makes business model obsolete (e.g., ride-sharing disrupting taxis); Failed innovation or delayed product launches • Resource Misalignment: Allocating resources to declining products instead of growth opportunities • Market/Industry Changes: Shift in customer needs and expectations; Regulatory changes forcing business model changes |
| COMPLIANCE & REGULATORY RISK15 | The risk that you violate laws, regulations, or internal policies, resulting in fines, legal action, or reputational damage. The regulatory environment is constantly changing. | • Pervasive: affects all areas of organization • Constantly evolving: new regulations, changing requirements • Penalties escalating: fines and enforcement becoming more severe • Jurisdiction-dependent: different rules in different countries • Partly controllable: you can strengthen controls, but regulatory changes are external | • Financial Crimes: Money laundering violations; Bribery and corruption; Sanctions violations • Data & Privacy: GDPR violations (Europe); CCPA violations (California); HIPAA violations (healthcare); Customer data breaches • Contract & Market Conduct: False advertising; Market manipulation; Insider trading; Misleading disclosures • Employment & Safety: Labor law violations; Health and safety violations; Harassment and discrimination • Industry-Specific: Healthcare regulations (HIPAA); Financial regulations (Banking Acts); Environmental regulations |
| REPUTATIONAL RISK1617 | The risk that negative publicity damages your brand, eroding customer trust, investor confidence, investor perception, or ability to attract talent. One of the hardest risks to quantify. | • Hidden until it happens: not visible in normal operations • Disproportionate impact: market values reputation more than the direct financial loss • Self-inflicted worse than external: fraud damages reputation 2x more than accidents • Long recovery time: trust takes years to rebuild • Interconnected: affects customer base, employees, investors, partners simultaneously | • Product/Service Failures: Volkswagen emissions scandal (2015): $30B+ in losses, brand destroyed, took years to recover; Boeing 737 MAX crashes: customer confidence shattered; Product recalls damaging trust • Ethical/Fraud Issues: Wells Fargo account scandal: reputation destroyed despite being largest bank; Facebook/Meta privacy scandals: customer trust eroded • Workplace Issues: Harassment scandals; Discrimination claims; Executive misconduct • Environmental/Social: Oil spills; Labor exploitation; Pollution incidents |
| CYBER & TECHNOLOGY RISK1819 | The risk of losses from disruption or failure of IT systems, data breaches, ransomware attacks, or technology obsolescence. Increasingly distinct from general operational risk. | • Rapidly evolving threat landscape: new attack vectors constantly emerge • Control-dependent: pricing based on current security posture, not history • Insurance available: unlike most strategic risks, cyber can be insured • Industry-dependent: high-risk sectors (finance, healthcare) pay more • Improving controls reduce premiums: strong incentive alignment | • Data Breaches: Hackers steal customer information; Personal data of millions exposed; Regulatory fines and lawsuits follow • Ransomware Attacks: Criminals lock you out of systems; Demand payment to restore access; Business operations halt • System Failures: Software bugs or aging infrastructure cause crashes; Website goes down; Payment systems fail • DDoS Attacks: Website flooded with traffic, becomes inaccessible; Business loses revenue during attack • Insider Threats: Disgruntled employee steals data; System administrator sabotages operations; Contractor misuses access |

Each of these types of risk is priced differently. Here’s another table, this time on what makes each one easy or hard to price:

| RISK TYPE | DEFINITION | PRICING CHALLENGE | KEY INSIGHT |
|---|---|---|---|
| HAZARD RISK (Pure Risk)56 | The possibility of loss from natural events or accidents. The oldest, most intuitive kind of risk. | Relatively straightforward to price because: • Historical data is abundant and reliable • Frequency and severity are stable over time | Easiest to price. Insurers have vast datasets spanning centuries showing how often fires, floods, and accidents occur. This precision makes hazard risk the most competitively priced and cheapest form of risk insurance. |
| OPERATIONAL RISK78910 | The risk that your business’s internal machinery breaks down. Unlike hazard risk, it’s inherent to doing business: you can’t eliminate it, only manage it. Also cannot be diversified away. Defined by Basel II as: “Risk of loss from inadequate or failed internal processes, people and systems, or external events.” | • Real drivers (control quality, governance, employee skill) are hard to measure • Cannot use simple historical formulas • Basel II uses crude proxy: operational risk capital = percentage of gross income • Limited historical data compared to hazard risk • Outcomes are correlated across firms during crises | Cannot diversify away. When 100 banks all face the same operational risk (say, a payment system cyberattack), they all suffer simultaneously. This systemic nature makes operational risk expensive to accept and pricing it requires judgment, not just formulas. |
| FINANCIAL RISK111213 | Risk from changes in financial variables: credit defaults, price movements, or inability to access funds. Encompasses three subcategories. | • Models based on historical data miss tail risk (rare catastrophic events) • Correlation assumptions break during crises (2008 showed this) • Pricing assumes future resembles past • Volatile and difficult to predict | Impossible to price accurately at extremes. Financial risk is driven by market sentiment, which can shift suddenly. Models work 99% of the time but fail catastrophically in the 1% (like 2008), when many risks materialize simultaneously. |
| STRATEGIC RISK14 | Risk that your business strategy is wrong. Risk from strategic decisions and competitive threats that can derail long-term objectives. Highest impact, but low frequency. | • No historical data for “probability that our strategy fails” • Each strategic decision is somewhat unique • Cannot use formulas or actuarial tables • Outcomes depend on management judgment and execution • Extremely difficult to quantify in advance | Cannot be insured. Strategic risk is almost entirely uninsurable because each company’s strategy is unique. CEOs and boards must accept this risk as part of doing business. Pricing relies on scenario analysis and management judgment, not hard data. |
| COMPLIANCE & REGULATORY RISK15 | The risk that you violate laws, regulations, or internal policies, resulting in fines, legal action, or reputational damage. The regulatory environment is constantly changing. | • Probability of enforcement depends on regulator priorities (which change) • Penalties are often discretionary and unpredictable • New regulations create retroactive compliance challenges • Conflicting guidance from different regulators • Costs increase with regulatory tightening | Costs are rising fast. Regulators are increasingly aggressive, penalties are larger, and reputational consequences are severe. Organizations must continuously invest in compliance infrastructure (legal teams, compliance officers, audits) as a cost of doing business. |
| REPUTATIONAL RISK1617 | The risk that negative publicity damages your brand, eroding customer trust, investor confidence, investor perception, or ability to attract talent. One of the hardest risks to quantify. | • Stock price falls MORE than announced loss (2x for fraud, 1x for accidents) • 26% of company value is directly attributable to reputation (one study) • No standard pricing model • Very difficult to quantify until it happens • Historical data limited | Stock market values reputation more than we can measure. When a company announces a $1B fraud loss, stock price might fall 5% ($5B loss in value). The extra $4B is “reputational loss”: the market’s judgment that the company is now riskier. Yet most companies can’t quantify or insure this risk. |
| CYBER & TECHNOLOGY RISK1819 | The risk of losses from disruption or failure of IT systems, data breaches, ransomware attacks, or technology obsolescence. Increasingly distinct from general operational risk. | • Unlike hazard risk (stable data over decades), cyber threats evolve rapidly • Historical data is unreliable: new attack types didn’t exist 5 years ago • Pricing focuses on current security posture, not past incidents • Rapidly changing insurance market (premiums spiked 80% in 2021-2022) • Standardization emerging (ISO 27001, NIST) | Pricing is behavior-based. Unlike traditional insurance (fixed premium regardless of actions), cyber insurance prices based on your current controls. Companies with firewalls, multi-factor authentication, and ISO 27001 certification pay ₹80,000/year. Those with weak security might pay ₹3,00,000 or be denied coverage. This creates powerful incentives to improve security. |

General principles of pricing risk

People react to risk in different ways. Some of us prefer the straight and narrow; others think nothing of doing things that most people would consider too risky. Think of how some people don’t mind skydiving, whereas others prefer their feet firmly on the ground. There are risks associated with both skydiving and staying on the Earth, but different people are comfortable with different risks.

Because people differ in how much risk they are willing to bear, risk can be transferred from someone who does not want it to someone who is willing to carry it. This transfer can be offered as a business service, for a price.

Now, before we go further, please understand that some risks can never be transferred; only the effect of their impact can be mitigated. People will die; that is life. But by buying term insurance, we can ensure our families don’t suffer financial loss on top of the loss of our love and support. Similarly, living beings get sick. By purchasing health insurance, we can make sure we don’t face financial difficulties if we get sick in a way that costs a lot of money to treat. We are not transferring the death and decay; we are transferring the financial cost of these events.

1. The Formula2021

With that out of the way, when someone asks you to bear their risk, you charge them a price. That price is made up of several components:

Price of Risk = Expected Loss + Administrative Costs + Risk Loading + Profit Margin

Where:

- Expected Loss is simply: Probability × Consequence. If there’s a 2% chance of a ₹1,00,000 loss, the expected loss is ₹2,000.

- Administrative Costs are the cost of doing business. For an insurer, this includes underwriting (reviewing your application), policy servicing (managing your account), claims processing, and marketing. For a bank, it includes loan documentation, monitoring your creditworthiness, and collecting payments if you default.

- Risk Loading is the “insurance premium on the insurance premium.” It’s an extra charge you demand to accept the fact that reality might differ from your expectations. This is where variance becomes critical.22

- Profit Margin is what you keep as profit.
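As a quick illustration, the formula can be written out in a few lines of Python. The expected loss uses the 2% and ₹1,00,000 figures from the text; the administrative cost, risk loading, and profit margin values are made-up placeholders, not figures from any real insurer.

```python
def price_of_risk(probability, consequence, admin_costs, risk_loading, profit_margin):
    """Price of Risk = Expected Loss + Administrative Costs + Risk Loading + Profit Margin."""
    expected_loss = probability * consequence
    return expected_loss + admin_costs + risk_loading + profit_margin


# From the text: a 2% chance of a Rs 1,00,000 loss.
expected_loss = 0.02 * 100_000

# Hypothetical values for the other three components (assumptions, in rupees).
price = price_of_risk(
    probability=0.02,
    consequence=100_000,
    admin_costs=300,
    risk_loading=400,
    profit_margin=200,
)

print(f"Expected loss: Rs {expected_loss:,.0f}")
print(f"Price charged: Rs {price:,.0f}")
```

With these placeholder values, the expected loss makes up most of the price, and the other three components sit on top of it.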

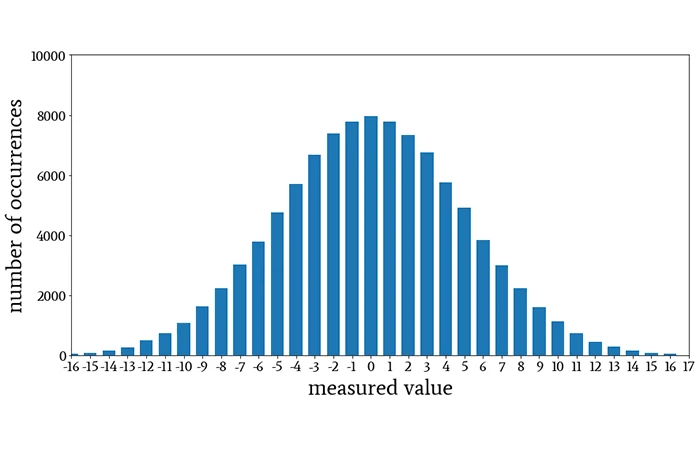

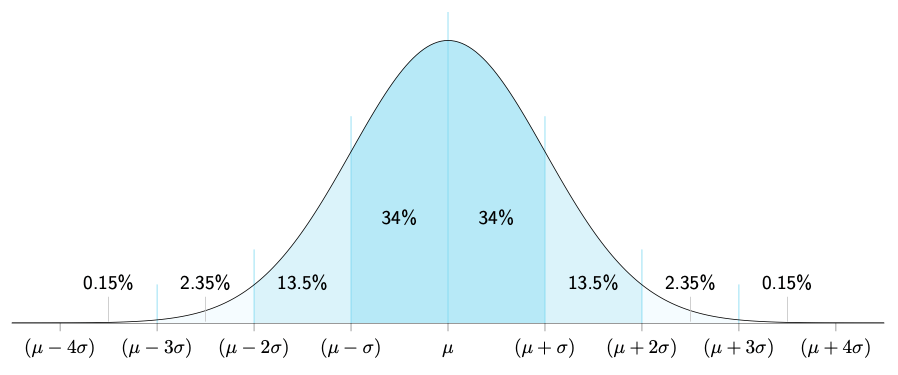

2. Variance

Variance is uncertainty about whether actual outcomes will match expected outcomes. As risk increases, variance often increases faster. Why? Most people fall close to the middle of the normal distribution (discussed in a separate post), and as risk increases, fewer and fewer people are either that risky or willing to take that risk (few will skydive, more will bungee jump, most will fly commercial). The fewer the people to whom a risk applies, the greater the variance, because the insurer has fewer people over whom to spread the risk. In other words, the law of large numbers works less effectively with small groups. With 1 million people, outcomes average out predictably, so you get the same or a very similar number of claims every year. With 50 people, you might get zero claims one year and three claims the next: massive volatility.

I just want to be sure this is clear, so here is another example. Suppose two people pool their money every month and agree that if one of them gets sick, the sick person can use a certain percentage of the pooled money to pay for treatment. It is possible that no one gets sick for many years, but it is also possible that one of them (50% of the contributors) or both (100% of the contributors) get sick at the same time. On the other hand, in a pooled health insurance scheme with many contributors, say 1 million, if 1 person gets sick, they are 1/1,000,000 of the contributors (0.0001% of the pool, which is much, much less than 50%).
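The effect of pool size can be sketched with a little arithmetic. The snippet below assumes every member claims independently with the same probability (a simplification), and the 2% claim probability is a hypothetical number chosen so that a pool of 50 expects about one claim a year.

```python
import math


def claim_volatility(pool_size, claim_probability):
    """Return (expected claims, standard deviation, relative volatility) for a pool.

    Assumes each member claims independently with the same probability,
    so the number of claims follows a binomial distribution.
    """
    expected = pool_size * claim_probability
    std_dev = math.sqrt(pool_size * claim_probability * (1 - claim_probability))
    return expected, std_dev, std_dev / expected


CLAIM_PROBABILITY = 0.02  # hypothetical: 2% of members claim in a year

small_pool = claim_volatility(50, CLAIM_PROBABILITY)
large_pool = claim_volatility(1_000_000, CLAIM_PROBABILITY)

for label, (expected, std_dev, relative) in (
    ("50 people", small_pool),
    ("1 million people", large_pool),
):
    print(
        f"{label}: expect {expected:,.0f} claims, give or take {std_dev:,.1f} "
        f"({relative:.1%} of the expected number)"
    )
```

Under these assumptions the small pool's claims swing by roughly as much as the expected number itself, while the large pool's claims stay within about one percent of expectation.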

Secondly, higher-risk individuals have more uncertain outcomes, meaning it’s harder to predict exactly what will happen. A skydiver faces multiple possible outcomes with varying probabilities: they could live unharmed, break bones, die from equipment failure, die from a heart attack mid-jump, or face other unpredictable complications. Each outcome has a different probability, making the overall risk calculation more complex. In contrast, a person simply walking on the ground faces far fewer potential causes of serious injury or death, so the range of possible outcomes (variance) is much narrower. Another way of looking at this is that a healthy 30-year-old non-smoker likely has fewer known causes of death historically than a 70-year-old smoker.

This is why insurance premiums for risky people increase disproportionately:

- The insurer must hold more capital to protect against bad luck.

- A 30-year-old non-smoker with a 0.05% probability of death in a year might have a premium of ₹3,000.

- A 60-year-old smoker with a 1% probability of death (20x higher) doesn’t pay 20x the premium (₹60,000). They pay 50x+ the premium (₹1,50,000 or more) because:

  - The absolute expected loss is 20x higher.

  - The variance around that expected loss is also much higher (more uncertainty about outcomes).

Insurers also worry about correlation—the risk that many claims happen simultaneously. A life insurer pricing individual deaths assumes they’re independent. But if a pandemic strikes, many policyholders might die at once. This correlation risk requires extra capital, adding to the risk loading.2324
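To see why correlation matters so much, here is a rough sketch using the standard formula for the variance of a sum of equally correlated risks. The portfolio size, death probability, and correlation value are all hypothetical, picked only to show the direction and size of the effect.

```python
import math


def total_claims_std(n_policies, death_probability, pairwise_correlation):
    """Standard deviation of the number of deaths in a portfolio.

    Uses Var(sum) = n * v * (1 + (n - 1) * rho), where v = p * (1 - p) is the
    variance of a single policy and rho is the correlation between any two.
    """
    single_variance = death_probability * (1 - death_probability)
    total_variance = (
        n_policies * single_variance * (1 + (n_policies - 1) * pairwise_correlation)
    )
    return math.sqrt(total_variance)


N_POLICIES = 10_000        # hypothetical portfolio size
DEATH_PROBABILITY = 0.001  # hypothetical annual probability of death

independent_std = total_claims_std(N_POLICIES, DEATH_PROBABILITY, 0.0)
correlated_std = total_claims_std(N_POLICIES, DEATH_PROBABILITY, 0.01)

print(f"Independent deaths: std dev of {independent_std:.1f} claims")
print(f"Correlation of 0.01: std dev of {correlated_std:.1f} claims")
```

In this sketch, even a correlation of 0.01 between policyholders makes the swing in total claims about ten times larger, which is the kind of gap the extra capital is meant to cover.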

3. Uncertainty

When an insurer lacks information about a particular risk, they will charge more for it, because they do not know how potent the risk is, or how frequently it occurs.2526

Suppose a bank is deciding whether to lend to two borrowers, both with self-reported income of ₹10 lakhs per year.

- Borrower A: A salaried employee with 10 years of bank statements, tax returns, and employer verification. The bank has rich information about their actual, consistent income.

- Borrower B: A self-employed consultant with only 2 years of tax returns. Income has varied between ₹5 lakhs and ₹15 lakhs per year. The bank’s uncertainty about their true ability to repay is high.

Both might have estimated default probabilities of, say, 2% based on available data. But the bank will charge Borrower B a higher interest rate, not because their actual default probability is higher, but because the bank’s uncertainty about that probability is higher.
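One simple way to make "uncertainty about the estimate" concrete is the standard error of each borrower's average income. The income histories below are invented for illustration (ten steady years for Borrower A, two volatile years for Borrower B); a real bank would use richer models, so treat this only as a sketch of the idea.

```python
import math
import statistics


def standard_error_of_mean(incomes):
    """Standard error of the average income: sample std dev / sqrt(number of years)."""
    return statistics.stdev(incomes) / math.sqrt(len(incomes))


# Hypothetical income histories in Rs lakhs per year (illustrative, not real data).
borrower_a = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 10.1, 9.9, 10.0, 10.0]  # 10 steady years
borrower_b = [5.0, 15.0]  # 2 volatile years

se_a = standard_error_of_mean(borrower_a)
se_b = standard_error_of_mean(borrower_b)

print(f"Borrower A: average {statistics.mean(borrower_a):.1f} lakhs, standard error {se_a:.2f}")
print(f"Borrower B: average {statistics.mean(borrower_b):.1f} lakhs, standard error {se_b:.2f}")
```

Both borrowers average ₹10 lakhs, but the bank's estimate for Borrower B is far less precise, and that imprecision is what the higher interest rate compensates for.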

This principle explains all of the following:

- Businesses in developed countries with strong financial reporting get cheaper capital than those in developing countries with weak disclosure.2728

- Companies listed on stock exchanges get better rates than private companies (more transparency).29

- Established firms in regulated industries get better rates than startups in emerging sectors.30

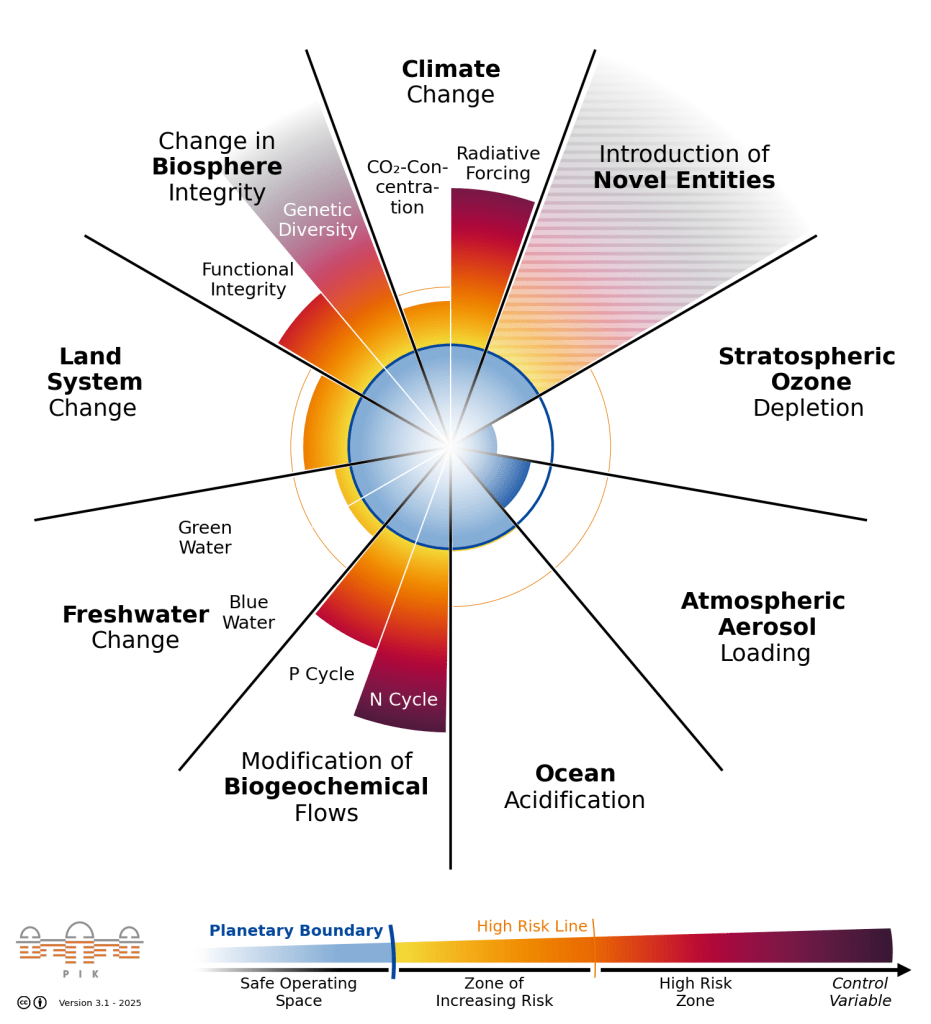

Therefore, the more standardised and measurable a risk, the cheaper it is to price and the lower the price demanded. Insurance for hazard risk (with centuries of actuarial data) is cheaper relative to coverage than climate insurance (with only decades of data).31 VaR models for market risk are widely accepted because market prices are observable. But there’s no standard model for reputational risk, so it’s not widely insured.32

This creates a system where:

- Predictable, measurable, insurable risks get priced accurately and competitively.

- Unpredictable, hard-to-measure risks are either:

  - Not insured at all (like most strategic risk).

  - Priced with huge margins because of the uncertainty (like reputational risk).

This is a profound source of inefficiency in capital allocation. Risks that are easiest to measure and quantify get the cheapest pricing and most capital. Risks that are hardest to measure—sometimes the ones that matter most—get starved of capital or don’t get priced at all.

One problem that has emerged from this is that models built on historical data simply cannot price tail risks (risks in the tails of the distribution, far from the average). One area this affects is climate risk and its pricing.3334 A different example, which many of us lived through, was the 2008-09 subprime financial crisis. Before the crisis, banks had calculated that simultaneous mortgage defaults across their portfolios should happen once every few thousand years. Yet it happened in 2007-2008. Why?35

The models went with historical data and assumed:

- Housing prices wouldn’t decline nationwide (they always went up historically).36

- Unemployment wouldn’t spike across industries simultaneously.37

- Banks wouldn’t stop lending to each other.37

But all three happened together, creating a “perfect storm” to which the models had assigned nearly zero probability. The tail risk was real; the pricing was wrong. Financial institutions now conduct stress testing, asking “What if housing prices fell 30%? What if unemployment doubled? What if credit markets froze?”, precisely because historical models miss these scenarios.
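A toy calculation shows how a model can assign "nearly zero probability" to something that is not actually that rare. Every probability below is hypothetical. The point is only the gap between treating the three events as independent and letting one common crisis drive all of them.

```python
# All probabilities below are hypothetical, chosen only to show the mechanism.
P_CRISIS = 0.01            # chance of an economy-wide crisis in a given year
P_EVENT_IN_CRISIS = 0.9    # chance each bad event happens if there is a crisis
P_EVENT_IN_NORMAL = 0.001  # chance each bad event happens in a normal year

# Chance of any single event in a year, averaged over crisis and normal years.
p_single = P_CRISIS * P_EVENT_IN_CRISIS + (1 - P_CRISIS) * P_EVENT_IN_NORMAL

# What a model assuming independence would say about all three happening together.
p_all_three_independent = p_single ** 3

# What holds when one common crisis drives all three events.
p_all_three_common_cause = (
    P_CRISIS * P_EVENT_IN_CRISIS ** 3 + (1 - P_CRISIS) * P_EVENT_IN_NORMAL ** 3
)

print(f"Each event alone: about 1 year in {1 / p_single:,.0f}")
print(f"All three, assuming independence: about 1 year in {1 / p_all_three_independent:,.0f}")
print(f"All three, with a common cause: about 1 year in {1 / p_all_three_common_cause:,.0f}")
```

Each event looks like a roughly one-in-a-hundred-years problem either way, but the independence assumption turns the joint event into something that seems to happen once in about a million years, when under the common-cause setup it is closer to once in a century or two.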

Thus, a financial advisor who says “stocks haven’t crashed in 50 years, so the probability is very low” is underpricing tail risk. And yet we still use historical data to price some kinds of risk. The next section talks about this and other methods of risk pricing.

Pricing different risks

Methodology 1: The Actuarial Approach (Hazard Risk)4

Insurance companies maintain vast databases of historical claims. For life insurance, they track millions of deaths by age, gender, health status, and lifestyle. For home insurance, they track fire and weather damage claims by location and property type. For auto insurance, they track accidents by driver age, vehicle type, and location. From this data, actuaries calculate frequency (how often does the event occur?) and severity (how much damage when it does?). The math relies on:

- Having huge sample sizes (law of large numbers).

- Accurate historical data (actuarial tables updated constantly).

- Stable risk—the probability of death doesn’t change dramatically over time.

- Why this works: Hazard risk has all these properties. Insurers have massive datasets, deaths are well-documented, and the probability of death doesn’t swing wildly year to year.

- Why it fails: When underlying assumptions break, actuarial models fail. During COVID-19, mortality rates spiked unexpectedly, and life insurers faced massive losses. The historical tables became temporarily unreliable.
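The frequency and severity calculation can be sketched as follows. The policy count and claim amounts are invented for illustration; real actuarial tables segment this by age, location, property type, and so on.

```python
from statistics import mean

# Hypothetical one-year claims experience for a book of home insurance policies.
POLICIES_IN_FORCE = 10_000
claim_amounts = [120_000, 80_000, 200_000, 50_000, 150_000]  # rupees, one entry per claim

frequency = len(claim_amounts) / POLICIES_IN_FORCE  # claims per policy per year
severity = mean(claim_amounts)                      # average cost per claim
pure_premium = frequency * severity                 # expected loss per policy per year

print(f"Frequency: {frequency:.4%} of policies claim in a year")
print(f"Severity: Rs {severity:,.0f} per claim")
print(f"Expected loss per policy: Rs {pure_premium:,.2f}")
```

The result is only the expected loss per policy. Administrative costs, risk loading, and profit margin would still be added on top to reach the price.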

Methodology 2: The Credit Approach (Financial Risk)383940

Banks estimate the Probability of Default (PD) of a borrower. This comes from:

- Credit ratings (developed from historical default rates of companies with similar characteristics).

- Credit scores (statistical models predicting default probability).

- Loan characteristics (collateral, loan-to-value ratio, term length).

They also estimate Loss Given Default (LGD): the share of the loan the bank loses if the borrower defaults, after recoveries such as collateral. If a borrower defaults on a ₹100 lakh loan backed by ₹60 lakhs of collateral, the bank recovers 60% and the LGD is 40%.

The interest rate is then set approximately as the risk-free rate plus a spread (the premium above the risk-free rate):

Interest Rate = Risk-Free Rate + (PD × LGD + Risk Loading) + Liquidity Premium + Other Premiums41
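Here is a minimal sketch of that formula in Python. The LGD uses the ₹100 lakh loan and ₹60 lakh collateral example from the text; the risk-free rate, PD, risk loading, and liquidity premium are hypothetical values chosen only to make the arithmetic visible.

```python
def loss_given_default(loan_amount, recovered_amount):
    """Share of the loan the lender loses after recoveries (for example, collateral)."""
    return (loan_amount - recovered_amount) / loan_amount


def interest_rate(risk_free_rate, pd, lgd, risk_loading, liquidity_premium, other_premiums=0.0):
    """Interest Rate = Risk-Free Rate + (PD x LGD + Risk Loading) + Liquidity Premium + Other Premiums."""
    return risk_free_rate + (pd * lgd + risk_loading) + liquidity_premium + other_premiums


# From the text: Rs 100 lakh loan backed by Rs 60 lakhs of collateral.
lgd = loss_given_default(loan_amount=100, recovered_amount=60)

# Hypothetical inputs (assumptions for illustration only).
rate = interest_rate(
    risk_free_rate=0.07,      # 7% risk-free rate
    pd=0.02,                  # 2% probability of default
    lgd=lgd,
    risk_loading=0.005,       # 0.5% loading for uncertainty
    liquidity_premium=0.003,  # 0.3% for holding an illiquid loan
)

print(f"LGD: {lgd:.0%}")
print(f"Expected credit loss (PD x LGD): {0.02 * lgd:.2%}")
print(f"Interest rate charged: {rate:.2%}")
```

Note that the expected credit loss (PD × LGD) is under one percentage point here; the rest of the spread comes from the loading and premiums.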

Premiums a lender may add on top of the risk-free rate:

| Risk Premium | Explanation |
|---|---|
| Credit Risk Premium42 | Compensation for the probability that the borrower defaults and the amount the lender loses if they do (PD × LGD) |
| Liquidity Premium43 | Compensation for holding an asset that is difficult to sell quickly (e.g., corporate loans are less liquid than government bonds) |
| Inflation Risk Premium44 | Compensation for uncertainty about future inflation; if inflation is higher than expected, the real value of repayments falls |
| Term Premium44 | Compensation for lending money for longer periods; longer loans have more uncertainty about interest rates and borrower circumstances |
| Currency Risk Premium45 | Compensation for the risk that exchange rates move unfavorably; relevant when borrowing in a foreign currency |
| Sovereign Risk Premium46 | Compensation for political and economic instability in the borrower’s country; reflects country-level risk beyond individual borrower risk |
| Regulatory Risk Premium47 | Compensation for the risk that changes in laws or regulations will harm the lender’s position |
| Prepayment Risk Premium48 | Compensation for the risk that the borrower repays early (often when interest rates fall), causing the lender to reinvest at lower rates |
| Concentration Risk Premium49 | Compensation for lending a large amount to a single borrower or sector, which increases the lender’s exposure |
| Call Risk Premium50 | Compensation for the risk that the bond issuer redeems the bond early, leaving investors with reinvestment risk |
| Event Risk Premium51 | Compensation for the risk of specific one-off events (mergers, leveraged buyouts, natural disasters) that suddenly change creditworthiness |
| Convertibility Risk Premium48 | Compensation for the risk that capital controls or currency restrictions prevent conversion to foreign currency |
| Transfer Risk Premium52 | Compensation for the risk that a government blocks or restricts cross-border payments, even if the borrower wants to pay |

- Why this works: Credit markets are large and competitive. Banks have decades of default data. Collateral can be valued. PD and LGD can be estimated with reasonable accuracy.

- Why it fails: When credit conditions change suddenly (as in 2008), the relationship between PD and actual defaults breaks. A borrower who seemed safe (PD 1%) might suddenly have a 20% probability of default if the economy collapses. This is called “correlation risk”—risks that seemed independent are actually correlated, and they all materialize simultaneously.

Methodology 3: Value at Risk (Market Risk)5354

When investment banks, traders, and portfolio managers hold stocks, bonds, or other financial assets, they face a fundamental question: “How much could we lose on a bad day?” Value at Risk (VaR) answers this question: “What’s the maximum loss I might suffer with 95% confidence over a given time period (usually one day)?”

Suppose you hold a portfolio of Indian stocks worth ₹1 crore. You want to know your VaR at 95% confidence for one day.

Here’s how you calculate it:

- Gather historical data: Look at how much your portfolio’s value changed each day over the past 5 years (roughly 1,250 trading days).

- Calculate daily returns: On some days, your portfolio gained 2%. On others, it lost 3%. Most days, changes were small (±0.5%).

- Rank all the losses: Sort all the daily changes from worst to best. For example:

  - Worst day: -10% (₹10 lakh loss)

  - 95% of days: the loss was smaller than 7%

  - Typical days: ±1%

- Identify the 95th percentile: Find the loss that was exceeded on only 5% of days (the worst 5% of outcomes). Let’s say this was -7%.

Your VaR is ₹7 lakhs.

What this means in plain English:

“Based on historical patterns, we are 95% confident that on any given day, we won’t lose more than ₹7 lakhs. But on 1 out of every 20 days (5% of the time), we might lose more than this—possibly much more.”
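The steps above can be sketched in Python. The return series here is fabricated (100 days, built so that the cutoff lands on -7% to match the example) and is a stand-in for the roughly 1,250 real trading days you would actually use; the function name and the `tail_percent` parameter are illustrative choices, not a standard API.

```python
def historical_var(daily_returns, portfolio_value, tail_percent=5):
    """Historical VaR: the loss that was exceeded on only `tail_percent`% of days."""
    ordered = sorted(daily_returns)                   # worst day first
    tail_count = len(ordered) * tail_percent // 100   # number of days in the worst tail
    cutoff_return = ordered[tail_count]               # first return outside the worst tail
    return -cutoff_return * portfolio_value


# Fabricated data: five very bad days, one day at -7%, and 94 ordinary days.
worst_days = [-0.10, -0.09, -0.085, -0.08, -0.075, -0.07]
ordinary_pattern = [-0.01, -0.005, 0.0, 0.005, 0.01, 0.02]
daily_returns = worst_days + [ordinary_pattern[i % 6] for i in range(94)]

PORTFOLIO_VALUE = 10_000_000  # Rs 1 crore, as in the example

var_95 = historical_var(daily_returns, PORTFOLIO_VALUE, tail_percent=5)
print(f"One-day 95% VaR: Rs {var_95:,.0f}")
```

Notice that the function says nothing about how bad the five days beyond the cutoff were. That blind spot is the weakness discussed further down.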

How Banks Use VaR:

Banks use VaR for three main purposes:

- Setting risk limits: “No trader can hold a position with VaR greater than ₹50 lakhs.”

- Allocating capital: “This trading desk’s portfolio has VaR of ₹2 crore, so we must set aside ₹2 crore in capital to cover potential losses.”

- Pricing risk: “We need to earn at least 10% return on our ₹2 crore capital (₹20 lakhs per year), so the portfolio must generate returns higher than the risk-free rate by at least this amount.”

- Why this works: Market prices are observable and historical data is abundant. VaR is simple to calculate and widely understood.

- Why it fails spectacularly: VaR assumes the future resembles the past. When it doesn’t—when a “tail risk” event occurs that’s much worse than historical data suggested—VaR provides false confidence. Black swan events—outliers far beyond historical norms—happen more often in real markets than VaR predicts. This is why sophisticated risk managers now conduct stress tests: “What if housing fell 30%? What if correlation across assets went to 1.0 (everything moves together)?” These scenarios often have probabilities that can’t be estimated from historical data.

Methodology 4: Reputational Risk Quantification16175556

Reputational risk is one of the hardest to price because reputation damage is:

- Invisible until it happens

- Subjective (how much is brand trust worth?)

- Interconnected (affects customers, employees, investors, suppliers simultaneously)

Yet we know reputation has enormous value: one study estimates that roughly 26% of a company’s market value is directly attributable to its reputation.57 So how do we price something intangible?

The Stock Price Method: When a company announces a major negative event (fraud, scandal, product failure), the stock price falls. But often, the stock price falls more than the announced financial loss. The difference is the market’s estimate of reputational damage.
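As a small worked example of the stock price method, the snippet below reuses the illustrative figures from the pricing table earlier in this article (a $1B announced fraud loss and a 5% fall in the share price). The $100B market capitalisation is implied by those figures rather than stated, so treat it as an assumption.

```python
def reputational_loss(market_cap_before, price_drop_fraction, announced_loss):
    """Market value lost beyond the announced financial loss."""
    market_value_lost = market_cap_before * price_drop_fraction
    return market_value_lost - announced_loss


MARKET_CAP_BEFORE = 100e9  # assumed: $100B, implied by "5% fall = $5B"
PRICE_DROP = 0.05          # share price falls 5%
ANNOUNCED_LOSS = 1e9       # $1B fraud loss

loss = reputational_loss(MARKET_CAP_BEFORE, PRICE_DROP, ANNOUNCED_LOSS)
print(f"Estimated reputational loss: ${loss / 1e9:.1f}B")
```

This attributes the whole excess drop to reputation, which is a simplification: some of it may reflect expected fines, lawsuits, or general market moves on the same day.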

Reputation risk quantification models try to price reputation risk systematically. The typical steps are:

- Identify reputation threats: Product recalls, scandals, poor earnings, social media backlash

- Estimate frequency: How often does each type of event happen in this industry?

- Model financial impact: Customer loss, revenue decline, employee turnover costs

- Quantify total effect: Project impact on profits over 3-5 years

However, unlike life insurance (centuries of death data) or credit risk (decades of default data), reputation damage is:

- Context-dependent: The same scandal might destroy one company but barely hurt another

- Hard to predict: Social media can amplify or diminish reputational harm unpredictably

- Self-reinforcing: Initial reputation damage can trigger customer flight, making things worse

This is why most companies don’t buy reputation risk insurance:

- Insurers can’t agree on how to price it

- Coverage is extremely expensive when available

- Policies have many exclusions

So reputation risk remains largely self-insured—companies must manage it through strong governance, ethical culture, and crisis response planning, but they can’t transfer it to an insurer the way they can with fire risk or credit risk.

Methodology 5: The Security Audit Approach (Cyber Risk)585960

Historically treated as operational risk, cyber risk is now often priced separately. Unlike traditional hazard risk (based on decades of historical data), cyber insurance prices risk based on current security posture. Insurers conduct security audits assessing:

- Business context: Industry (finance = higher risk), revenue size, number of employees, data sensitivity.

- Technical controls: Firewalls, intrusion detection, endpoint protection, multi-factor authentication.

- Process maturity: Patch management, vulnerability assessment, incident response plans.

- Compliance: Certifications like ISO 27001 or NIST Cybersecurity Framework.

- Training: Employee security awareness, phishing simulations.

Unlike traditional insurance (where the premium is largely fixed regardless of your actions), cyber insurance creates incentive alignment: companies are rewarded for improving security. This is why cyber premiums vary so widely, from ₹80,000 to ₹3,00,000 for similar coverage, depending on security posture. If the insured company becomes better prepared, its premium can go down.

The industry is evolving rapidly, and pricing models are updated as cyber threats change. Premiums spiked 80% in 2021-2022 (driven by a surge in ransomware) but have since stabilized as companies improved controls and insurers refined their models.
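To make the link between audit results and price concrete, here is a minimal sketch of posture-based pricing. Everything except the ₹80,000 to ₹3,00,000 range is an assumption for illustration: the weights on the five audit areas, the 0-to-1 scoring scale, and the straight-line interpolation between the two ends of the range are hypothetical, and real insurers' models will differ.

```python
# Hypothetical weights for the five audit areas listed above.
AUDIT_WEIGHTS = {
    "business_context": 0.20,
    "technical_controls": 0.30,
    "process_maturity": 0.25,
    "compliance": 0.15,
    "training": 0.10,
}

MIN_PREMIUM = 80_000   # Rs., low end of the range quoted in the text
MAX_PREMIUM = 300_000  # Rs., high end of the range quoted in the text


def posture_score(audit_scores):
    """Weighted average of per-area scores, each from 0.0 (weak) to 1.0 (strong)."""
    for area in AUDIT_WEIGHTS:
        score = audit_scores[area]
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{area} score must be between 0 and 1, got {score}")
    return sum(weight * audit_scores[area] for area, weight in AUDIT_WEIGHTS.items())


def annual_premium(audit_scores):
    """Linear interpolation (an assumption): better posture means a lower premium."""
    score = posture_score(audit_scores)
    return MAX_PREMIUM - score * (MAX_PREMIUM - MIN_PREMIUM)


weak = {area: 0.2 for area in AUDIT_WEIGHTS}
strong = {area: 0.9 for area in AUDIT_WEIGHTS}
print(round(annual_premium(weak)), round(annual_premium(strong)))
```

The point of the sketch is the direction of the incentive: raising any audit score lowers the premium, which is the alignment the text describes.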

Methodology 6: Scenario Analysis (Strategic Risk)6162

Strategic risk is fundamentally different because:

- Can’t be insured—no insurer will cover “your strategy might be wrong”

- No historical data exists for “probability our specific strategy fails”

- Each decision is unique—your market entry isn’t comparable to another company’s

- Outcomes depend on management judgment, execution capability, and competitor actions

Instead of formulas, companies use scenario analysis—imagining multiple possible futures and testing strategy robustness across them.

The Process:

Step 1: Define the Current Strategy. Example: An e-commerce company currently selling books and electronics is considering expanding into furniture delivery.

Step 2: Imagine Alternative Futures (Scenarios): Scenario planning typically develops 3-5 scenarios representing different ways the future might unfold. Assign each scenario a probability and an estimate of how much the company would lose or gain if it occurred.

Step 3: Calculate Expected Value (With Huge Caveats).

Example:

Scenario A: “Competitive Onslaught”

- 3 major competitors enter within 18 months

- Price war erupts, margins drop 20%

- Company loses ₹50 crore over 3 years

- Probability: 60%

Scenario B: “Logistics Nightmare”

- Delivery complexity exceeds expectations

- High return rates (15%)

- Company loses ₹30 crore

- Probability: 40%

Scenario C: “Weak Demand”

- Market adoption slower than projected

- Company loses ₹80 crore

- Probability: 30%

Scenario D: “Success”

- Market responds positively

- Company gains ₹150 crore

- Probability: 20%

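Note: These probabilities sum to 150%, not 100%, because the scenarios aren’t mutually exclusive: more than one could occur at once (e.g., you could face both competitive pressure AND logistics challenges). Adding up probability-weighted impacts across overlapping scenarios is valid only if their impacts are assumed to simply add together when they occur at the same time.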

Expected Outcome = Σ (Probability of Scenario × Impact)

= (0.6 × -₹50cr) + (0.4 × -₹30cr) + (0.3 × -₹80cr) + (0.2 × +₹150cr)

= -₹30cr - ₹12cr - ₹24cr + ₹30cr

= -₹36 crore (an expected loss of ₹36 crore)
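The same calculation can be checked in code. This sketch uses the four scenarios from the worked example; the function and variable names are illustrative, and it assumes (as the calculation does) that impacts add up when scenarios overlap.

```python
# (name, probability, impact in Rs. crore) taken from the worked example.
scenarios = [
    ("Competitive Onslaught", 0.60, -50.0),
    ("Logistics Nightmare", 0.40, -30.0),
    ("Weak Demand", 0.30, -80.0),
    ("Success", 0.20, 150.0),
]


def expected_outcome(scenarios):
    """Sum of probability-weighted impacts across all scenarios."""
    return sum(probability * impact for _, probability, impact in scenarios)


result = expected_outcome(scenarios)
total_probability = sum(probability for _, probability, _ in scenarios)

# Rounded to sidestep floating-point noise in the last decimal places.
print(round(result, 2))             # expected outcome in Rs. crore
print(round(total_probability, 2))  # exceeds 1.0 because scenarios overlap
```

The result reproduces the ₹36 crore expected loss, and the probability total of 1.5 makes the overlap between scenarios explicit. Changing a single probability or impact and rerunning is a quick way to see how sensitive the conclusion is to any one guess.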

- Why this works: Strategic risk can’t be transferred to an insurer, and there’s no historical data on “furniture market entry outcomes” for this specific company, because each strategic decision is somewhat unique. Scenario analysis gives organizations a structured way to manage that risk through planning, contingency analysis, and management judgment.

- Why it fails: Scenarios often miss the most important surprises. In 2020, COVID-19 wasn’t in most companies’ scenarios. When reality diverges from scenarios, organizations must adapt on the fly. This is why CEOs, not risk managers, bear ultimate responsibility for strategic risk.

Sources

- Life Actuarial (A) Task Force – APF CSO VM-M (2015)

- Gender and Smoker Distinct Mortality Table Development – Ghosh & Krishnaswamy

- Socioeconomic inequality in life expectancy in India – BMJ Global Health

- Big Data and the Future Actuary – Society of Actuaries

- What Is Pure Risk? – Investopedia

- Types of Risks—Risk Exposures – FlatWorld (Baranoff)

- Operational Risk – Supervisory Guidelines for the AMA – BIS (BCBS196)

- Module 3 – Operational Risk Guidance – GFSC

- Operational Risk – Basel 3.1 Implementation – Bank of England

- Operational Risk Management: The Ultimate Guide – MetricStream

- Credit risk, market risk, operational risk and liquidity risk – IndianEconomy.com

- Types of Financial Risks – Fiveable

- Categories of Risk – OCC

- Categories of Risk – OCC (duplicate link)

- Operational Risk Management: The Ultimate Guide – MetricStream (duplicate link)

- The Market Reaction to Operational Loss Announcements – Boston Fed

- Reputational Risk – Does it really Matter Against Financial Risk? – GARP

- Cyber Insurance in India – DSCI

- Reality check on the future of the cyber insurance market – Swiss Re

- Expense Load – IRMI

- Chapter 7 – Premium Foundations – Loss Data Analytics (open text)

- The Theory of Insurance Risk Premiums – Kahane (ASTIN / CAS)

- A review of capital requirements for pandemic risk – BIS FSI Briefs

- An alternative approach to manage mortality catastrophe risks under Solvency II

- Recursive correlation between voluntary disclosure, cost of capital, and firm value

- Cost of capital and earnings transparency – ScienceDirect

- Disclosure and cost of equity capital in emerging markets – ScienceDirect

- Effect of integrated reporting quality disclosure on cost of equity capital

- Going rate: How the cost of debt differs for private and public firms – Notre Dame

- Rate of Return Regulation Revisited (utilities) – Haas Berkeley working paper

- Climate Change Risk Assessment for the Insurance Industry – Geneva Association

- Assessing the Risks of Insuring Reputation Risk – Actuaries / CRO Forum

- Tailoring tail risk models for clean energy investments – Nature HSS Communications

- Climate Change Risk Assessment for the Insurance Industry – Geneva Association (duplicate link)

- Incorrectly Applying Default Correlation Theory: Causes of the Subprime Mortgage Crisis – NHSJS

- The Central Role of Home Prices in the Current Financial Crisis – Brookings

- Risk Management Lessons from the Global Banking Crisis – SEC / FSB

- Expected Loss (EL): Definition, Calculation, and Importance – CFI

- Loss Given Default (LGD) – Wall Street Prep

- Banking Risk Management (PD, EAD, LGD) – Roopya

- An Empirical Decomposition of Risk and Liquidity in Nominal and Inflation‑Indexed Yields – NBER

- The Hidden Risks of Private Credit – and How to Spot Them – GARP

- What Is Risk Premia – GreenCo ESG

- Interest Rate as the Sum of Real Risk‑free Rate and Risk Premiums – AnalystPrep

- Categories of Risk – OCC (duplicate link)

- Decomposing Government Yield Spreads into Credit and Liquidity Components – Danmarks Nationalbank

- Cost of Capital and Capital Markets: A Primer for Utility Regulators – NARUC

- Portfolio Risk Management & Investment – ETDB

- Concentration Risk on the Buy Side of Credit Markets – CFA Institute Blog

- Climate change financial risks: Implications for asset pricing and risk management – ScienceDirect

- Event Risk Premia – Sebastian Stoeckl (slides)

- Transfer of Risk – Investopedia

- Value at Risk (VaR) Models – QuestDB

- Introduction to Value at Risk (VaR) – QuantInsti

- Reputational Risk Quantification Model – WTW

- Reputational risk – the elephant in the room – Airmic

- $13.8 TRILLION IN PLAIN SIGHT – The Reputation Driving S&P 500 Value – Echo Research

- Cybersecurity Insurance Audit – Insureon

- Preparing for Cyber Insurance Audits with Compliance Scanners – ConnectSecure

- How to Reduce your Cyber Liability Insurance Premium – Databrackets

- Scenario Analysis Explained – Investopedia

- Scenario Analysis: Definition, Process, and Benefits – NetSuite